Cosmology, the modern scientific discipline that studies the universe through astronomy, physics and chemistry, has been thriving for the past few decades.

We now have a comprehensive, descriptive model of cosmology, built from full-sky astronomical surveys and ground-based experiments in particle physics. We have strong evidence that dark matter exists because of the gravitational effects it appears to have on galaxy clusters. (Interested to know what dark matter is and why it matters? Check out this article here!)

We also know that the universe is expanding at an increasing rate, likely caused by a mysterious force called dark energy.

But ironically, the more we know about the vast universe, the more we... don’t know. There are still so many surprises in store and much to be unearthed.

What mysteries will be revealed with the highly anticipated arrival of exascale supercomputers? Buckle up, cosmo-geeks!

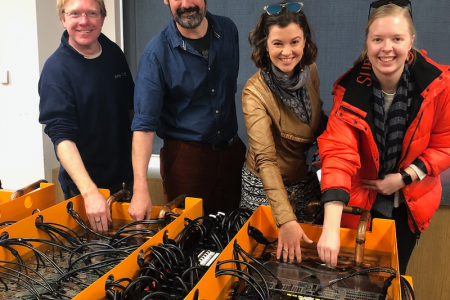

The Aurora supercomputer.

One of the world’s first exascale computers will be delivered later this year. Housed at Argonne National Laboratory, the supercomputer, dubbed Aurora, will contribute to the revolution in cosmological research.

The supercomputer will be built on a future generation of Intel Xeon Scalable “Sapphire Rapids” processors, accelerated by Intel’s Xe architecture-based “Ponte Vecchio” GPUs. Geek out on the computer’s specifications here!

Led by Dr Salman Habib, director of Argonne’s Computational Science Division and an Argonne Distinguished Fellow, the cosmology team at Argonne are preparing to adapt and run their Hardware/Hybrid Accelerated Cosmology Code (HACC) on Aurora and the nation’s other upcoming exascale systems.

The capabilities of the code are also currently being extended by the ExaSky team, a collaboration between Argonne National Laboratory, Los Alamos National Laboratory and Lawrence Berkeley National Laboratory, to efficiently utilise exascale resources as they become available.

Shining some light on the dark stuff.

Observations have confirmed that the universe is expanding and that the expansion rate is increasing with time. Scientists ascribe this phenomenon to the effect of “dark energy”, but the underlying cause is still not well understood.

Furthermore, current models of cosmology, which comprise ingredients such as dark energy and dark matter, provide a very good description of astronomical and cosmological observations. But small discrepancies in these models do exist, and they need addressing.

Dr Habib’s research, which will be turbocharged by the computing prowess of Aurora, aims to close these gaps in understanding and resolve the discrepancies by extending existing cosmological simulation codes, such as HACC, to work on exascale platforms.

Pinning down these discrepancies could turn out to be either a bad thing or a good thing.

On the one hand, they could just be measurement artefacts, as cosmological measurements are often complicated and difficult to make.

But on the other hand, some of the discrepancies could potentially indicate new physics, which could lead to a gripping series of pivotal moments that advance our understanding of the universe. The new knowledge could shed further light on the nature of dark matter and reveal brand new aspects of the fundamental physics of matter and its interactions.

Either way, the advent of exascale computing will undoubtedly revolutionise the field of cosmology, offering greater insight into the origin, evolution and content of the universe.